|

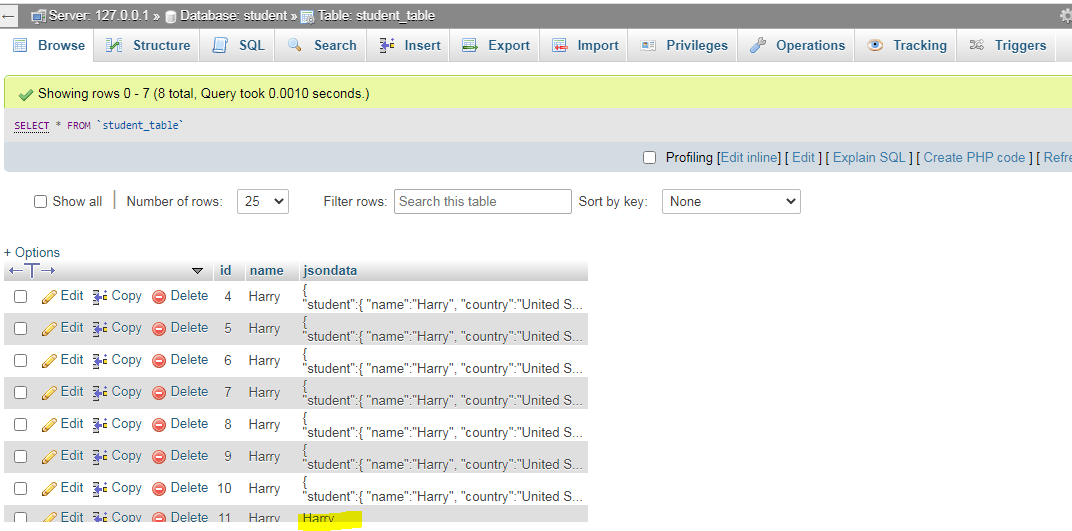

5/30/2023

Redshift data types json

Redshift offers limited support to work with JSON documents. We have three options to load JSON data into Redshift. We can convert JSON to a relational model when loading the data to Redshift (COPY JSON functions). This requires us to pre-create the relational target data model and to manually map the JSON elements to the target table columns.

For example, suppose you have a sparse table, where you need to have many columns to fully represent all possible attributes, but most of the column values are NULL for any given row or any given column. By using JSON for storage, you might be able to store the data for a row in key:value pairs in a single JSON string and eliminate the sparsely populated table columns.

So this is the approach I've been trying to implement. But I haven't quite been able to achieve what I'm hoping to, mostly due to difficulties populating and aggregating the SUPER column.

How can I insert into this kind of SUPER column from another SELECT query? All Redshift docs only really discuss SUPER columns in the context of initial data load (e.g. by using json_parse), but never discuss the case where this data is generated from another Redshift query. I understand that this is because the preferred approach is to load SUPER data but convert it to columnar data as soon as possible. If the data already existed as SUPER in the Redshift table, these latter 2 queries would work.

Until now, I've discussed a simplified example which only aggregates by user. In reality, there are other dimensions of aggregation, and some analyses of this table will need to re-aggregate the values shown in the table above. How can I re-aggregate this kind of SUPER column, while retaining the SUPER structure?

3) Name of column: this is the name of the JSON data column that we use with the JSON function to retrieve data from the table.

Update the following environment parameters in cdk.

Use a search engine to search for parsing the SUPER data type.
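To make the re-aggregation question concrete, here is a minimal Python sketch of the intended result, written outside Redshift. The rows, the `day` dimension, and the category names are hypothetical, since the original table is not shown here. It only illustrates what "re-aggregate while retaining the nested structure" means. It is not a Redshift solution.

```python
from collections import Counter, defaultdict

# Hypothetical pre-aggregated rows: (user, day, nested per-category counts).
# The nested dict stands in for the SUPER column.
rows = [
    ("alice", "2023-05-01", {"click": 2, "view": 5}),
    ("alice", "2023-05-02", {"click": 1, "purchase": 1}),
    ("bob", "2023-05-01", {"view": 3}),
]

def reaggregate_by_user(rows):
    """Collapse the day dimension, summing nested counts key by key."""
    totals = defaultdict(Counter)
    for user, _day, counts in rows:
        totals[user].update(counts)  # Counter.update adds values per key
    # Return plain dicts so the nested structure is preserved as-is.
    return {user: dict(counter) for user, counter in totals.items()}

result = reaggregate_by_user(rows)
```

The output keeps one nested object per user, which is the shape a re-aggregated SUPER column would need to have.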

Because JSON strings can be stored in a single column, using JSON might be more efficient than storing your data in tabular format. I have tried playing around with the Redshift JSON functions, but without being able to write functions, use loops, or have variables in Redshift, I really can't see a way to do this.
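The idea of packing a sparse row into a single JSON string can be sketched in Python as follows. The column names and the `row_to_json` helper are hypothetical and used only for illustration. The sketch assumes NULL attributes can simply be omitted from the stored string.

```python
import json

def row_to_json(row):
    """Keep only the populated attributes and serialize them as one JSON string."""
    present = {key: value for key, value in row.items() if value is not None}
    return json.dumps(present, sort_keys=True)

# Hypothetical sparse row: most attributes are NULL (None) for this item.
sparse_row = {"color": "red", "size": None, "weight_kg": 1.5, "voltage": None}

packed = row_to_json(sparse_row)
```

A string like `packed` would occupy one column, instead of one mostly empty column per possible attribute.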

Redshift supports ingesting many different data types into the warehouse. This, in turn, allows a user or a system to handle a wide range of use cases. The data type also restricts what can be ingested into the system, which helps preserve the integrity of the data.

This also pretty much exactly matches the intended SUPER use case described by the AWS docs: when you need to store a relatively small set of key-value pairs, you might save space by storing the data in JSON format. SUPER configuration considerations apply when you use the Amazon Redshift SUPER data type and PartiQL.

If the value associated with a key is a complex Avro data type such as byte, array, record, map, or link, COPY loads the value as a string. Here, the string is the JSON representation of the data.
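As a rough illustration of "loads the value as a string, where the string is the JSON representation of the data", the Python sketch below keeps scalar values as they are and serializes nested values to JSON text. It only mimics the described behavior under that assumption. It is not how COPY is implemented, and the `load_record` helper and the sample record are hypothetical.

```python
import json

def load_record(record):
    """Keep scalars unchanged; store complex values as their JSON text."""
    loaded = {}
    for key, value in record.items():
        if isinstance(value, (dict, list)):
            loaded[key] = json.dumps(value)  # complex value becomes a string
        else:
            loaded[key] = value
    return loaded

# Hypothetical record with one scalar, one array, and one nested record.
loaded = load_record({"id": 7, "tags": ["a", "b"], "address": {"city": "Oslo"}})
```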


|
